AI training data: Buyer’s guide for physical AI, robotics and autonomous systems

How to evaluate AI training data partners for physical AI, robotics, autonomous vehicles and world models.

What is AI training data?

AI training data for physical AI consists of sensor-based datasets, including camera, lidar, radar and other modalities, annotated to teach models how to perceive, interpret and act in real-world environments.

AI training data is the primary constraint separating physical AI systems that work in controlled environments from those that perform reliably in the real world. Controlled environments are tightly managed settings where conditions are predictable and simplified, so models only encounter a narrow range of scenarios. In the real world, conditions are variable, unpredictable and constantly changing, which creates a gap between training data and actual deployment environments. Success requires not just volume, but precision, cross-modal consistency and the quality of human judgment embedded in every annotation decision. For enterprise teams building autonomous vehicles, robotics programs or the newest generation of world models, selecting the right AI data services partner is one of the most consequential decisions in the development stack.

This guide explains how AI training data is collected, annotated and evaluated for physical AI systems. It provides a framework for selecting AI data partners based on capability, scale, quality systems and security across automotive advanced driver-assistance systems (ADAS), robotics and world models.

Why AI training data quality is the bottleneck for autonomous systems

Autonomous vehicles, robotics and physical AI systems depend on training data that reflects the complexity of the real world. Unlike large language models (LLMs), which draw on petabytes of text scraped from the web, physical AI systems must be trained on sensor data that does not exist in abundance. Robotics data collection and annotation lag significantly behind text-based model training in scale and availability. Training these systems requires millions of hours of annotated, egocentric, multi-sensor data, and datasets of that kind do not yet exist at sufficient scale.

The distinction between how LLMs and physical AI systems are trained is critical for procurement teams to understand. LLMs improve by scaling both pre-training, on data spanning text, images, audio and video, and post-training, through supervised fine-tuning and reinforcement learning from human feedback. Physical AI systems improve by ingesting precisely annotated sensor data that reflects real-world tasks, actions and environmental context. The gap between a prototype system and a production-ready model most often traces back to data quality, diversity and annotation precision rather than model architecture.

"Pilots can be gold-plated with manual processes and hand-picked people. Pilots prove feasibility. Production-grade annotation operations work despite people. They prove repeatability. The gap between pilots and production is at-scale workforces and the discipline to enforce consistency," said Steve Nemzer, senior director, artificial intelligence research & innovation at TELUS Digital.

Automotive ADAS and autonomous vehicles: What enterprise AV teams need from a data partner

Automotive advanced driver-assistance systems (ADAS) and fully autonomous vehicles represent two distinct tiers of physical AI development, each with different data requirements. ADAS in production vehicles today rely on perception models trained on camera and radar data for lane keeping, collision avoidance and adaptive cruise control. Level 4 and Level 5 (L4/L5) autonomous driving programs add lidar, ultrasonic sensors and sensor fusion stacks that must perform at highway speeds in all weather conditions, across new geographies and in edge-case scenarios that are rare by definition.

The data requirements for L4+ autonomous driving programs are among the most demanding in the AI industry. A data partner for end-to-end field operations testing must support the full value chain: route planning, data collection, sensor selection, annotation and transfer, all with traceability from raw sensor input to labeled training data.

Key evaluation criteria for autonomous vehicle (AV) programs include sensor-specific expertise across lidar point clouds, radar object detection and camera-lidar fusion; annotation accuracy at sub-pixel level; safety-critical quality assurance systems; and regulatory traceability. Managed annotation services are often preferred for safety-critical applications because the cost of an annotation error is not a degraded user experience but a potential perception failure in a moving vehicle.

"Production-ready" in this context means service-level agreements tied to model performance, not just throughput. An AV data partner that delivers labeled data at volume without domain expertise in automotive sensor systems is delivering undifferentiated output.

For autonomous vehicle programs, AI training data partners must demonstrate sensor-specific expertise, safety-grade quality systems and traceability from raw data to labeled outputs.

Physical AI and robotics: The new data challenge

Physical AI systems are trained using data collected from real-world environments and are designed to interact with the physical world. The AI training data requirements for physical AI diverge from both LLM training and traditional computer vision in important ways.

Physical AI systems primarily require egocentric data, meaning data captured from the perspective of the device or agent itself rather than from a fixed observation point. They also incorporate third-person and external viewpoints to improve spatial awareness and contextual understanding. These systems require multi-sensor datasets that include not just visual information but force, torque, proximity and spatial context. They also require pre-training and post-training datasets that cover generalizable behaviors across diverse environments, not just task-specific labeled examples.

"Demand for large-scale training data and annotation services is growing fastest in the robotics and embodied AI space. The data collection and annotation requirements for robotics and world models require a significant shift from earlier LLM training approaches. There is no large, readily available corpus of pre-training data. Some researchers estimate that only a fraction of the required data exists today, meaning millions of hours of annotated egocentric, multi-sensor datasets will be needed," Nemzer explains.

The robotics data stack has three parts: pre-training datasets covering vision-language-action (VLA) models and state-action-behavior data; post-training datasets for fine-tuning to specific environments and annotation tasks; and simulation-to-real data pipelines that bridge the gap between synthetic training environments and real-world deployment.

Synthetic data plays a meaningful role in robotics and physical AI training but cannot replace real-world data entirely. In simulation environments like NVIDIA Isaac Sim, synthetic data is highly effective for training embodied AI systems. For edge-case scenarios that are important but occur too infrequently to be reliably collected at scale, synthetic data fills a critical gap. And in regulated industries where real-world data is too sensitive to use, synthetic data becomes the primary training source. But the long tail of real-world variability, including sensor artifacts and adversarial conditions, requires real-world data to anchor model training.

"The balance to strike is to use synthetic data to fill specific data gaps, while anchoring training on real-world data that grounds the model in the long tail of real-world variability. Synthetic data is less reliable for teaching models about the sensor artifacts or adversarial conditions they'll encounter in production," Nemzer says.

Multi-sensor data collection and labeling: From lidar and radar to camera fusion

Data collection is the first bottleneck in physical AI development. For robotics programs, this often means setting up labs with prototype devices, conducting field operations, collecting millions of hours of interaction and action data, and preparing that data for pre-training and post-training annotation. For AV programs, it means roadway and in-cabin operations, instrumented vehicles, route planning, geographic diversity and sensor calibration at scale.

Multi-sensor annotation is the defining technical challenge for AV and robotics data pipelines. A pedestrian identified in a lidar point cloud must correspond precisely to the same pedestrian in the camera frame and the radar return. This cross-modal consistency requires annotation platforms that natively support 3D bounding boxes, semantic segmentation, panoptic segmentation and temporal sequence labeling across fused sensor data. Scaling this kind of annotation without sacrificing consistency is where general-purpose labeling platforms reliably fall short.

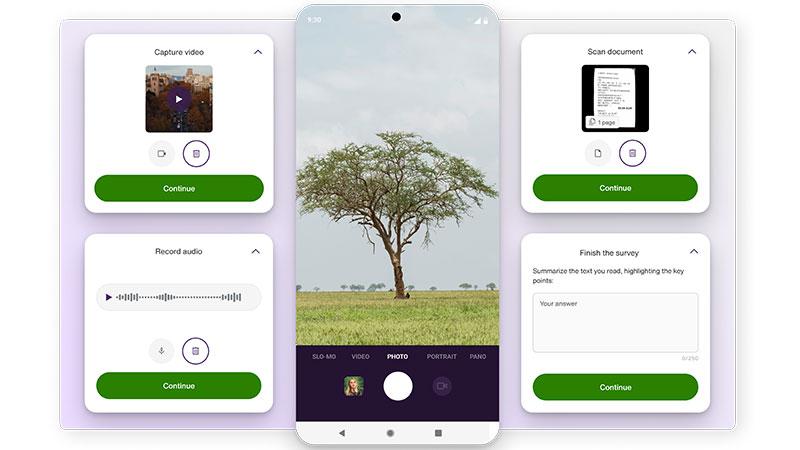

TELUS Digital's Ground Truth Studio platform was designed for this complexity, supporting camera-lidar fusion, 3D point cloud segmentation with compatibility across solid-state and flash lidar sensors, lane detection in 2D and 3D scenes and automated object interpolation and tracking for video annotation at scale.

Key requirement: Cross-modal consistency

Multi-sensor AI training data must maintain alignment across all sensor inputs. Objects must be labeled consistently across lidar, camera and radar streams to avoid model conflicts.
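
One way to picture a cross-modal consistency check is to project the center of a lidar 3D label into the camera image and confirm that it falls inside the 2D box drawn for the same object. The sketch below is illustrative only: the pinhole camera model, the intrinsics, the pixel margin and all names (`Box2D`, `project_to_image`, `labels_agree`) are assumptions, and it presumes the lidar point has already been transformed into camera coordinates using calibrated extrinsics.

```python
from dataclasses import dataclass


@dataclass
class Box2D:
    """Axis-aligned camera-frame box in pixels (hypothetical label format)."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def project_to_image(point_cam, fx, fy, cx, cy):
    """Project a 3D point in camera coordinates (metres) with a pinhole model.

    Returns (u, v) in pixels, or None if the point is behind the camera.
    """
    x, y, z = point_cam
    if z <= 0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)


def labels_agree(lidar_center_cam, camera_box, intrinsics, margin_px=5.0):
    """True if the projected lidar label centre lands inside the camera box."""
    uv = project_to_image(lidar_center_cam, *intrinsics)
    if uv is None:
        return False
    u, v = uv
    inside_x = camera_box.x_min - margin_px <= u <= camera_box.x_max + margin_px
    inside_y = camera_box.y_min - margin_px <= v <= camera_box.y_max + margin_px
    return inside_x and inside_y


# Assumed intrinsics (fx, fy, cx, cy) and made-up labels for one pedestrian.
INTRINSICS = (1000.0, 1000.0, 640.0, 360.0)
pedestrian_box = Box2D(600, 300, 700, 500)

consistent = labels_agree((0.5, 0.4, 10.0), pedestrian_box, INTRINSICS)
inconsistent = labels_agree((3.0, 0.4, 10.0), pedestrian_box, INTRINSICS)
```

A production pipeline would also compare radar returns, timestamps and track identities. The point of the sketch is only that consistency can be tested mechanically once sensors are calibrated, so that disagreements are surfaced for review rather than silently passed to training.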

Human-in-the-loop at scale: Why automation alone cannot solve safety-critical annotation

Automated labeling improves throughput, but cannot fully replace human judgment in safety-critical AI applications. The long tail of real-world scenarios illustrates why. Rain, fog and dust degrade lidar data quality, creating noise that challenges automated labeling systems. Occluded objects, unusual road configurations and rare edge cases require human judgment to interpret correctly.

"Annotation automation hits a wall in those safety-critical edge cases where ambiguity is high. Interpreting the gesture of a crossing guard is far trickier than identifying a yield sign. The most effective annotation processes at scale do not attempt to eliminate human judgment entirely. Automated systems flag high-uncertainty cases using confidence thresholds and disagreement signals, and human-in-the-loop annotators resolve them using structured decision frameworks," Nemzer added.

Effective human-in-the-loop quality processes at enterprise scale concentrate human expertise where it matters most. Bottlenecks dissipate when humans validate only what automation gets wrong. Routing hard decisions to the right humans and feeding that expertise back to the model treats annotators as a scarce signal source rather than a labeling factory.
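
The routing idea described above, in which automation flags uncertain cases and humans resolve them, can be sketched in a few lines. This is a minimal illustration and not a description of any vendor's system: the thresholds, the label names and the `route` function are hypothetical, and the disagreement signal is assumed to come from several independent models labeling the same item.

```python
from collections import Counter


def route(predictions, min_confidence=0.85, min_agreement=1.0):
    """Decide whether an item can be auto-accepted or needs a human.

    predictions: list of (label, confidence) pairs from independent models.
    Returns "auto_accept" or "human_review".
    """
    if not predictions:
        return "human_review"  # nothing to trust, so a person decides

    labels = [label for label, _ in predictions]
    top_label, votes = Counter(labels).most_common(1)[0]
    agreement = votes / len(labels)

    # Weakest confidence among the models that voted for the winning label.
    lowest_conf = min(conf for label, conf in predictions if label == top_label)

    if agreement < min_agreement or lowest_conf < min_confidence:
        return "human_review"
    return "auto_accept"


# Made-up examples: a clear sign versus an ambiguous gesture.
clear_sign = route([("yield_sign", 0.97), ("yield_sign", 0.95)])
ambiguous_gesture = route([("guard_stop", 0.62), ("guard_wave", 0.58)])
shaky_agreement = route([("pedestrian", 0.99), ("pedestrian", 0.70)])
```

Appropriate thresholds depend on the program's risk tolerance. Stricter values send more items to annotators, and looser values trade review cost for residual error.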

TELUS Digital maintains a global AI Community of more than 1 million trained annotators and linguists across six continents, delivering more than 2 billion labels annually. Quality management systems are designed for the traceability and audit requirements of safety-critical programs.

Data operations for autonomous systems: Managing complexity across the ML pipeline

AI training data pipelines for AV and robotics programs include ingestion, preprocessing, annotation, quality assurance, delivery and version control. These pipelines require coordination across data collection, labeling and validation workflows. Petabyte-scale datasets with geographic and temporal diversity require infrastructure, tooling and process discipline that many vendors are not equipped to provide.

"Many organizations think they have a tooling problem when they actually have a process problem. By the time they realize it, they've already hired too many people toiling in an inefficient workflow," Nemzer noted.

Data lineage and version control are not optional for safety-critical AI. Enterprise teams must be able to quickly answer three critical questions without extensive manual investigation: What exact data trained this model? What quality standards did it meet? And why did it fail on a specific case? If answering these questions requires digging through logs, the program is not ready for production.
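
To make the three questions concrete, the sketch below shows one possible shape for a lineage record. It is an assumption-laden illustration rather than a reference design: `DatasetVersion`, `LineageRegistry`, the field names and the sample values are all hypothetical, and a real system would persist these records in a database or a data versioning tool instead of in memory.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class DatasetVersion:
    """Immutable description of one labeled dataset release (hypothetical)."""
    dataset_id: str
    file_hashes: tuple      # content hashes of the labeled files
    qa_pass_rate: float     # share of sampled labels that passed QA review
    guideline_version: str  # annotation guidelines the labels followed

    def fingerprint(self):
        """Stable SHA-256 digest of the record's contents."""
        payload = json.dumps(asdict(self), sort_keys=True).encode()
        return hashlib.sha256(payload).hexdigest()


class LineageRegistry:
    """In-memory map from models to the dataset versions that trained them."""

    def __init__(self):
        self._datasets = {}
        self._models = {}

    def register_dataset(self, version):
        fp = version.fingerprint()
        self._datasets[fp] = version
        return fp

    def register_model(self, model_id, dataset_fingerprints):
        self._models[model_id] = list(dataset_fingerprints)

    def training_data(self, model_id):
        """Which exact dataset versions trained this model, and at what quality?"""
        return [self._datasets[fp] for fp in self._models[model_id]]

    def models_trained_on(self, file_hash):
        """Which models saw a given file (useful when tracing a failure)?"""
        return sorted(
            model_id
            for model_id, fps in self._models.items()
            if any(file_hash in self._datasets[fp].file_hashes for fp in fps)
        )


registry = LineageRegistry()
v1 = DatasetVersion("urban-night", ("a1f3", "b7c9"), 0.987, "guidelines-v4")
fp = registry.register_dataset(v1)
registry.register_model("perception-2024-06", [fp])

trained_on = registry.training_data("perception-2024-06")
affected = registry.models_trained_on("b7c9")
```

With records like these, the first two questions become lookups. The third still needs investigation, but it starts from a known set of files, guidelines and QA results instead of from logs.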

Compliance and data governance requirements for safety-critical applications include certification against, or demonstrated compliance with, ISO 27001, ISO 31700-1, HITRUST, PCI, GDPR, SOC 2 and TISAX, as well as secure facilities for sensitive data handling and audit trails for regulatory traceability. These are not background credentials for leading AV and robotics programs. They are active procurement requirements.

World models: Definitions, terminology and data requirements

World models are AI systems trained to build internal representations of how the physical world works. They represent a frontier area of physical AI research and are now central to autonomous driving, robotics and embodied AI development.

The AI training data needs for world models combine elements of AV and robotics data with additional requirements unique to generative modeling of physical environments. This includes pre-training datasets built from diverse real-world sensor captures, egocentric interaction datasets that capture how agents move through and affect their environments and simulation datasets that cover the edge cases and long-tail scenarios the model must be able to reason about.

Sim-to-real transfer, the challenge of making a model trained in simulation perform reliably in the real world, is a central problem in world model development. A microscopic surface variation that changes friction can defeat a robot that performed well in simulation. The gap is bridged by combining simulation training with real-world data collection and human-in-the-loop annotation that captures the physical nuance simulation cannot replicate.

Evaluating AI training data vendors: What enterprise buyers should look for

The evaluation framework for AI data services partners in physical AI applications covers five dimensions: capability, scale, quality systems, domain expertise and security.

Capability means sensor-specific annotation expertise: Can this partner handle the full range of modalities your program requires, from lidar point clouds and radar object detection to camera-lidar fusion and temporal sequence labeling? Generic computer vision capability does not translate automatically to physical AI annotation.

Scale means annotator community size, geographic coverage, language coverage and the infrastructure to maintain consistency across a global workforce. TELUS Digital's AI Community includes more than 1 million trained data annotators and linguists across six continents, delivering billions of labels annually across computer vision and post-training workflows for AI systems.

Quality systems means the mechanisms through which accuracy is maintained at production scale: active learning, consensus annotation, multi-stage review and quality assurance workflows designed for safety-critical applications rather than consumer AI.
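
Consensus annotation can be illustrated with 2D boxes: several annotators label the same object, pairwise overlap is measured with intersection over union (IoU), and the item is accepted only if every pair agrees closely enough. The following is a minimal sketch under stated assumptions. The 0.8 threshold, the mean-box merge rule and the function names are illustrative choices, not a description of any particular vendor's workflow.

```python
from itertools import combinations


def iou(a, b):
    """Intersection over union of two boxes (x_min, y_min, x_max, y_max)."""
    overlap_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    overlap_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = overlap_w * overlap_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def consensus(boxes, min_iou=0.8):
    """Return (agreed, merged_box) for boxes drawn by different annotators.

    agreed is True only if every pair of boxes overlaps by at least min_iou.
    merged_box is the coordinate-wise mean of all boxes.
    """
    if len(boxes) < 2:
        raise ValueError("consensus needs at least two annotations")
    agreed = all(iou(a, b) >= min_iou for a, b in combinations(boxes, 2))
    merged = tuple(sum(coords) / len(boxes) for coords in zip(*boxes))
    return agreed, merged


# Made-up annotations of one object by three annotators.
close_boxes = [(100, 100, 200, 200), (102, 98, 201, 203), (99, 101, 198, 199)]
agreed, merged = consensus(close_boxes)

# Two annotators who clearly disagree; this item would go to a reviewer.
disputed, _ = consensus([(100, 100, 200, 200), (150, 150, 250, 250)])
```

Items that fail the agreement test are natural candidates for the multi-stage review mentioned above, since disagreement between trained annotators often signals an ambiguous scene or an unclear guideline.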

Domain expertise means annotators and technical leads who understand what they are labeling. A team that understands automotive sensor systems, robotics kinematics and the safety requirements of physical AI programs will produce better-calibrated training data than a generalist team working from written instructions.

Security means certifications and infrastructure that meet the compliance requirements of your program: ISO 27001 for information security, TISAX for automotive data, GDPR and CCPA/CPRA for privacy. It also means being able to answer basic chain-of-custody questions: Where was the training data sourced? Who has access to it? Who has touched it? What security protocols were in place?

Industry analyst evaluations provide one independent lens. TELUS Digital was named a Leader in Everest Group's inaugural PEAK Matrix Assessment for Data Annotation and Labeling Solutions for AI/ML in 2024, one of only five providers to earn the designation out of 19 evaluated. The assessment highlighted TELUS Digital's platform-first approach and its ability to handle complex use cases across different data types and modalities.

For programs with long-term data requirements, provider maturity and operational experience become important differentiators. Established vendors with long histories in data annotation often have more refined workflows, quality systems and institutional knowledge that newer entrants may take years to develop.

How to choose the right AI training data partner

Enterprise teams should match vendor capabilities to their specific use case:

- Autonomous vehicles: Require high-precision, multi-sensor annotation and regulatory traceability

- Robotics: Require egocentric data, behavioral annotation and real-world interaction datasets

- World models: Require large-scale, sequential datasets and sim-to-real transfer capabilities

Managed services are typically better suited for safety-critical applications, while self-serve annotation platforms may be sufficient for non-critical use cases or internal tooling.

AI training data vendor evaluation checklist

- Capability: Supports lidar, radar, camera and sensor fusion annotation

- Scale: Global workforce and infrastructure for large datasets

- Quality systems: Multi-stage quality assurance (QA), consensus workflows, active learning

- Domain expertise: Experience in AV, robotics or physical AI systems

- Security: ISO 27001, SOC 2, TISAX, GDPR compliance
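
Teams that want to compare vendors side by side sometimes turn a checklist like this into a weighted scorecard. The sketch below shows one way to do that. The 1-to-5 rating scale, the weights and the sample ratings are hypothetical placeholders that each program would set for itself. Nothing in this guide prescribes specific values.

```python
DIMENSIONS = (
    "capability",
    "scale",
    "quality_systems",
    "domain_expertise",
    "security",
)


def score_vendor(ratings, weights):
    """Weighted average of per-dimension ratings.

    ratings: dict mapping each dimension to a rating (for example 1 to 5).
    weights: dict mapping each dimension to its relative importance.
    """
    missing = [d for d in DIMENSIONS if d not in ratings or d not in weights]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    total_weight = sum(weights[d] for d in DIMENSIONS)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(ratings[d] * weights[d] for d in DIMENSIONS) / total_weight


# Hypothetical weights: this example program counts quality and security double.
example_weights = {
    "capability": 1,
    "scale": 1,
    "quality_systems": 2,
    "domain_expertise": 1,
    "security": 2,
}
example_ratings = {
    "capability": 4,
    "scale": 5,
    "quality_systems": 3,
    "domain_expertise": 4,
    "security": 5,
}

example_score = score_vendor(example_ratings, example_weights)
```

A scorecard only ranks vendors that already meet hard requirements. For safety-critical programs, items such as required certifications are usually better treated as pass/fail gates before any scoring takes place.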

AI training data is the foundation of physical AI performance. As systems move from controlled environments into real-world deployment, data quality, diversity and annotation precision become the primary constraints on reliability and scale.

Enterprise teams evaluating AI data partners should prioritize domain expertise, quality systems and the ability to support complex, multi-sensor data pipelines across real-world environments.

To learn more about how TELUS Digital supports AI training data for autonomous systems, robotics and world models, explore our AI data services solutions.